Auditing the State with AI: A Proof of Concept Using CyberPath's Grant Agreement

02 Apr 2026

Artificial intelligence is often discussed as a tool that governments may use to monitor or influence citizens. This paper examines a different possibility: whether AI can support citizen-led accountability by extracting public input, structuring policy expectations, and comparing them against what government ultimately funded, designed, or implemented.

I investigate this question through a case study of CyberPath, the Australian Government-funded pilot of a cybersecurity professionalization scheme. The case is well suited to this purpose because it generated a substantial public record, including expert criticism, community recommendations, research papers, and an official grant agreement. This makes it possible to compare public expectations with the government’s funded outputs in a structured and transparent way.

This study argues that AI can help transform scattered public records into a structured comparison between public input and official output. Once those materials are organized in comparable form, citizens can assess where a policy reflects public concerns, where it only partially responds, and where it remains silent. The contribution of this paper is to show how AI can make this kind of document-based comparison faster, more systematic, and more accessible.

Research Question

How can AI support citizen-led accountability by extracting public input, structuring policy expectations, and performing gap assessments between what citizens said and what government ultimately funded, designed, or implemented?

Methodology

- Select the case study: I selected CyberPath and its government grant as the case study.

- Provide the government document for analysis: I used the grant agreement as the primary government output in the case study.

- Provide relevant public and industry input for analysis: I assembled a corpus of comments, recommendations, critiques, articles, and research papers directly related to the CyberPath grant and the public debate surrounding cybersecurity professionalization in Australia.

- Perform the gap assessment: I instructed the model to compare the public input against the grant’s stated outputs, milestones, and deliverables in order to identify areas of alignment, partial alignment, and omission.

- Review and refine the output: I reviewed the model’s classifications against the source material and consolidated them into a final, AI-assisted gap assessment. For the purposes of the analysis, “fully addressed” referred to issues clearly reflected in the grant document, “partially addressed” referred to issues that were acknowledged or only weakly reflected, and “missing” referred to issues for which no clear evidence of inclusion was identified in the visible (unredacted) portions of the grant. “High significance” referred to issues judged likely to affect the legitimacy, governance, accessibility, or long-term viability of the scheme, while “lower significance” referred to issues judged less central to those outcomes.

- Develop recommendations: I used the findings of the gap assessment to develop recommendations for the Australian Government, CyberPath, and cybersecurity professionals.
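The coverage and significance categories defined in the review step can be written down as a small, explicit schema, so that every classification carries its evidence and its source. The Python sketch below shows one way to do this. The `GapFinding` record, its fields (`grant_evidence`, `sources`), and the `review_problems` check are hypothetical illustrations. They are not part of the study's spreadsheet or of the method as it was run.

```python
from dataclasses import dataclass
from enum import Enum


class Coverage(Enum):
    """Coverage categories as defined in the review step of the methodology."""
    FULLY_ADDRESSED = "fully addressed"          # clearly reflected in the grant document
    PARTIALLY_ADDRESSED = "partially addressed"  # acknowledged or only weakly reflected
    MISSING = "missing"                          # no clear evidence in the visible (unredacted) text


class Significance(Enum):
    HIGH = "high"    # likely to affect legitimacy, governance, accessibility, or viability
    LOWER = "lower"  # judged less central to those outcomes


@dataclass(frozen=True)
class GapFinding:
    """One criterion's classification (hypothetical field names)."""
    criterion: str                 # theme, recommendation, or concern from the public input
    coverage: Coverage
    significance: Significance
    grant_evidence: str = ""       # quoted clause, milestone, or deliverable from the grant
    sources: tuple[str, ...] = ()  # corpus documents that raised the issue

    def review_problems(self) -> list[str]:
        """Return reasons a human reviewer should re-check this finding."""
        problems = []
        if self.coverage is not Coverage.MISSING and not self.grant_evidence:
            problems.append("claims coverage but cites no grant text")
        if self.coverage is Coverage.MISSING and self.grant_evidence:
            problems.append("marked missing but cites grant text")
        if not self.sources:
            problems.append("not traced to any source in the corpus")
        return problems


# Hypothetical finding for illustration; not taken from the study's spreadsheet.
finding = GapFinding(
    criterion="Published exit criteria for the pilot",
    coverage=Coverage.PARTIALLY_ADDRESSED,
    significance=Significance.HIGH,
    sources=("Understanding First, Solutions Second",),
)
print(finding.review_problems())  # → ['claims coverage but cites no grant text']
```

Keeping the categories as an enum rather than free text has a practical benefit. Converting a model's label with `Coverage(label)` raises `ValueError` for anything outside the three defined categories, so no fourth category can slip in silently.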

Case Background

In December 2024, the Australian Department of Home Affairs released a grant opportunity to pilot a cybersecurity professionalization scheme. (1) The proposal triggered substantial debate across the Australian cybersecurity community. Public responses included a scientific paper by Michael Collins (2), a collection of 40 industry perspectives (3), multiple articles written by the author, commentary linked to former AISA board members (4), and a broader set of social media posts and comments from practitioners and stakeholders.

In November 2025, the Australian Government awarded the grant to a consortium led by the Australian Computer Society (ACS). A redacted version of the grant agreement was later obtained through a Freedom of Information (FOI) request and used as the primary government document in this study. (5)

Analytical Setup

The grant agreement and a corpus of relevant industry feedback were analyzed using ChatGPT 5.4 in order to compare public input against official output. The corpus included research papers, public commentary, recommendations, and critiques directed at Home Affairs and organizations involved in the professionalization process, including ACS, AISA, AWSN, and Aus3C. The objective was to identify areas of alignment, partial alignment, and omission.

Corpus

The source corpus used in this study consisted of the following core documents and source categories:

- the CyberPath grant agreement;

- Understanding First, Solutions Second by Michael Collins;

- Reconsider the Australian Government’s grant for professionalising the cybersecurity industry by Benjamin Mosse;

- Listening Before Leaping: AISA’s Cautionary Path on Professionalisation by Benjamin Mosse; and

- supplementary public commentary and online discussion directly related to CyberPath and the broader Australian professionalization debate.

The corpus was assembled by the author and is therefore partly shaped by the author’s selection decisions. It should not be understood as a complete representation of all possible public views, but as a defined source set used to test the feasibility of AI-assisted gap assessment in this case.

The principal documents used in the analysis are listed in the references and associated supplementary materials.

Limitations

The analysis was conducted on a redacted version of the grant agreement, so issues classified as missing may still be addressed in the redacted sections. For that reason, the results are not interpreted as proof that Home Affairs did or did not consider industry feedback.

The purpose of this study is narrower. It is to evaluate the feasibility and analytical usefulness of AI-assisted gap assessment in the context of citizen-led accountability.

The results are also shaped by the choice of source materials, the prompt design, the model used, and the author’s review of the final classifications. Different source selections, prompts, or models may produce different outputs.

Because the corpus was assembled by the author and includes materials written or curated by the author, the study is exposed to selection and framing effects. The findings should therefore be interpreted as a proof of concept for the method rather than as a neutral or exhaustive reconstruction of all possible public input.

The primary contribution of this paper is methodological rather than adjudicative: it does not attempt to determine whether Home Affairs acted properly or improperly, but to demonstrate a structured AI-assisted method for comparing public input against official output.

CyberPath is therefore used in this paper as a case study for demonstrating the method, not as the sole or final basis for judging the merits of professionalization policy in Australia.

Validation

No formal inter-model or repeated-run validation was conducted in this study. For that reason, the paper does not claim that the specific output reported here would necessarily be reproduced in identical form across different runs, prompts, models, or coding strategies. The study should therefore be read as demonstrating analytical feasibility rather than methodological reliability. Future work should test whether similar gap patterns are produced across repeated runs, alternative models, and independent reviewers.

Findings

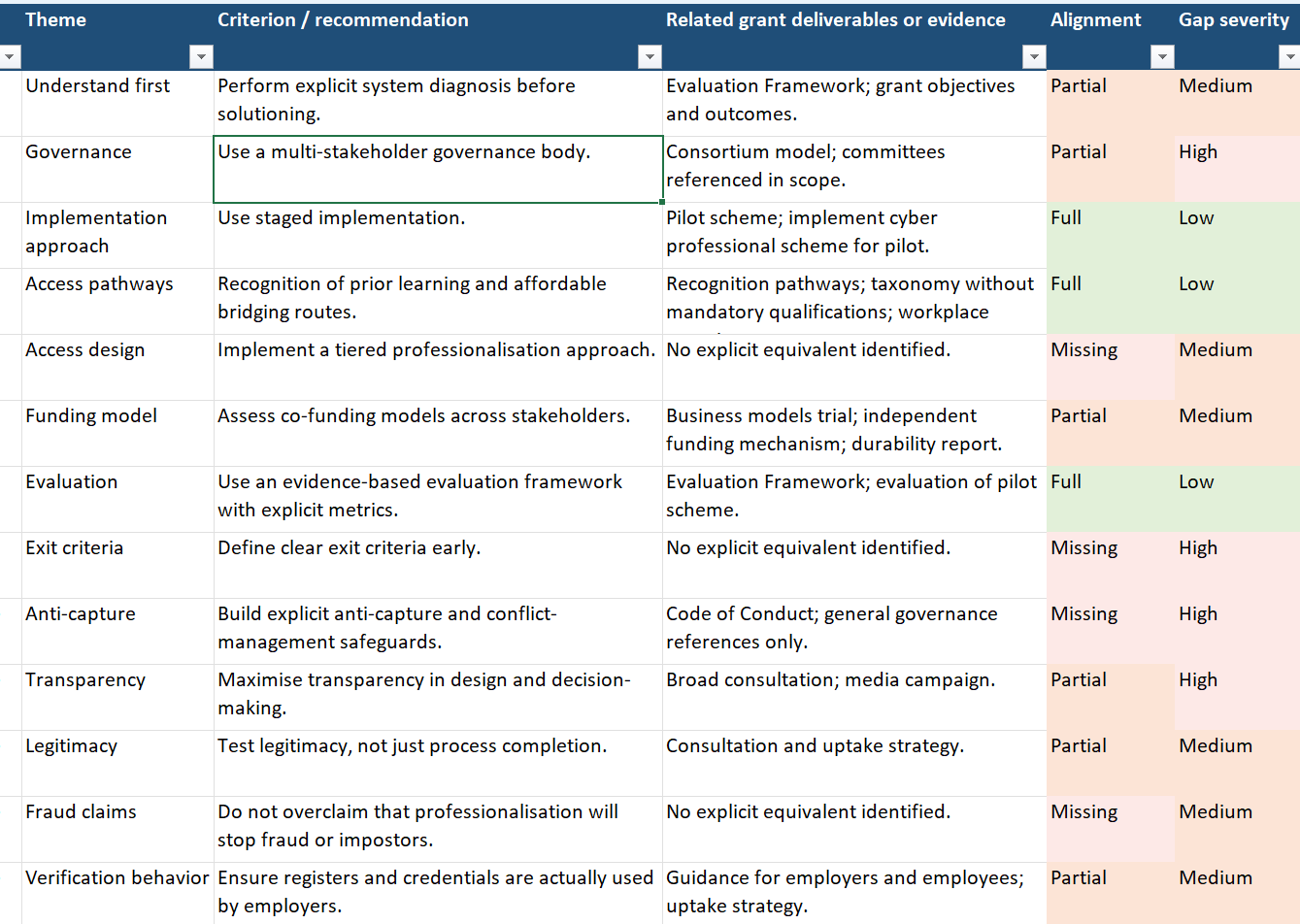

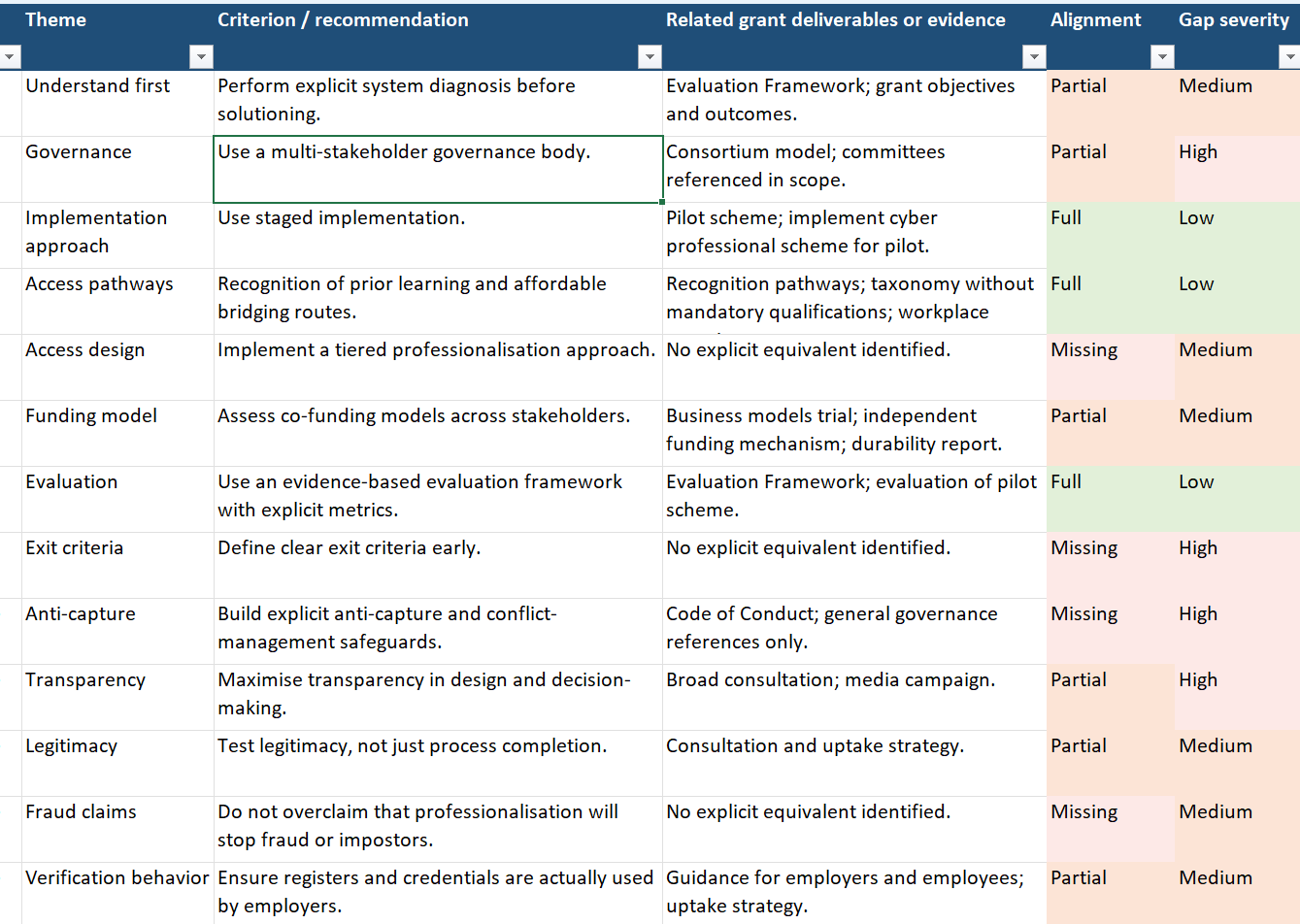

A total of 28 evaluation criteria were generated by asking the model to extract recurring themes, recommendations, and concerns from the source corpus. Overlapping items were then merged, and the resulting set was reviewed by the author against the source material before being used in the final comparison.
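In this study, the merging step was performed by the model and then reviewed by the author. A rough, deterministic pre-pass before that review could group near-duplicate items by string similarity. The Python sketch below uses the standard library's `difflib.SequenceMatcher` with an assumed 0.8 threshold. This is a swapped-in technique, not the procedure used in the study, and it will miss items that overlap in meaning but not in wording. The function names are hypothetical.

```python
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    # Lowercase and collapse whitespace so trivial differences don't block a match.
    return " ".join(text.lower().split())


def merge_overlapping(items: list[str], threshold: float = 0.8) -> list[list[str]]:
    """Greedily group items whose normalized text resembles a group's first item.

    Groups are returned in first-seen order; a reviewer then writes one criterion per group.
    """
    groups: list[list[str]] = []
    for item in items:
        key = normalize(item)
        for group in groups:
            if SequenceMatcher(None, key, normalize(group[0])).ratio() >= threshold:
                group.append(item)
                break
        else:  # no existing group was similar enough, so start a new one
            groups.append([item])
    return groups


# Invented example items, paraphrasing themes rather than quoting the corpus.
items = [
    "Publish a formal risk assessment",
    "publish a formal  risk assessment.",
    "Protect against gatekeeping",
]
for group in merge_overlapping(items):
    print(group)
# → ['Publish a formal risk assessment', 'publish a formal  risk assessment.']
# → ['Protect against gatekeeping']
```

The function only groups items and never rewrites them, so the reviewer still decides what each merged criterion should say.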

The AI-assisted gap assessment then compared the grant agreement against each of those 28 evaluation criteria.

Of these, 4 were assessed as fully addressed, 15 as partially addressed, and 9 as missing. In other words, 24 of the 28 criteria (about 86%) drawn from the feedback reviewed in this study were either only partly reflected in the grant design or not clearly reflected at all.

The pattern of results was uneven. The grant appeared comparatively more responsive in areas such as pathways, recognition routes, pilot design, and the existence of an evaluation component. These are the areas where the grant most clearly aligned with at least part of the public input. By contrast, the grant appeared weaker in areas relating to governance, anti-capture safeguards, formal risk assessment, business-case rigor, exit criteria, operating and enforcement capability, and protection against gatekeeping effects.

The assessment also found that 14 of the 28 criteria were of high significance. Among these, 6 were both high significance and missing, while 8 were high significance and only partially addressed. None of the high-significance criteria was therefore assessed as fully addressed. This result is notable. It suggests that the main gaps were not peripheral design details. They were concentrated in issues that appear central to the legitimacy, governance, and long-term viability of the scheme.
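The reported totals are internally consistent, and they imply a full cross-tabulation. If the 14 high-significance criteria split into 6 missing and 8 partially addressed, then the remaining 14 lower-significance criteria must account for all 4 fully addressed criteria, plus 7 partially addressed and 3 missing. The Python sketch below rebuilds labels from those implied counts and recomputes the headline figures. The counts come from the totals in the text, not from the per-criterion spreadsheet, and the `summarize` helper is hypothetical.

```python
from collections import Counter

Label = tuple[str, str]  # (significance, coverage) for one evaluation criterion


def summarize(labels: list[Label]) -> dict[str, object]:
    """Recompute headline figures from one (significance, coverage) label per criterion."""
    if not labels:
        raise ValueError("no criteria to summarize")
    by_coverage = Counter(coverage for _, coverage in labels)
    by_pair = Counter(labels)
    total = len(labels)
    not_fully = total - by_coverage["fully addressed"]
    return {
        "total": total,
        "by_coverage": dict(by_coverage),
        "high_missing": by_pair[("high", "missing")],
        "high_partial": by_pair[("high", "partially addressed")],
        "high_fully": by_pair[("high", "fully addressed")],
        "share_not_fully": round(not_fully / total, 3),
    }


# Counts implied by the totals reported in the text, not read from the spreadsheet.
implied_counts = {
    ("high", "partially addressed"): 8,
    ("high", "missing"): 6,
    ("lower", "fully addressed"): 4,
    ("lower", "partially addressed"): 7,
    ("lower", "missing"): 3,
}
labels = [pair for pair, n in implied_counts.items() for _ in range(n)]
summary = summarize(labels)
print(summary["total"], summary["high_missing"], summary["high_partial"],
      summary["high_fully"], summary["share_not_fully"])  # → 28 6 8 0 0.857
```

Running the same function over the labels in the supplementary spreadsheet would be a quick way to check the published summary against the per-criterion data.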

The additional material from the industry feedback paper and AISA’s published position strengthened this pattern rather than changing it. Both sources reinforced concerns about mixed industry support, conflicts of interest, insufficient consultation, weak evidence for return on investment, gatekeeping risks, and the possibility that professionalization may not solve the workforce problems it claims to address. In the gap assessment, these concerns were usually assessed as either missing or partially addressed, not fully resolved.

Taken together, the results suggest that the AI-assisted method was able to recover a clear structure from a large and fragmented documentary record. It identified areas where the grant aligned with public expectations, but also revealed repeated omissions and unresolved tensions. The value of the method, therefore, was not that it produced a final judgment on the policy. Rather, it made the relationship between public input and government output more visible, more structured, and more open to scrutiny.

Download the Gap Assessment

The full gap assessment spreadsheet is provided as a supplementary research file. Minor cosmetic adjustments were made to improve readability and presentation, but the underlying analytical content was not modified.

Reproducibility

A key strength of the method demonstrated in this study is that it is reproducible in principle. Researchers, journalists, citizens, and civil society groups can apply it using the AI system of their choice, provided that the source materials, prompts, and comparison criteria are clearly documented.

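The process is straightforward: collect the relevant source materials, extract and review evaluation criteria from the public input, load the government output and those criteria into the model, and instruct the model to perform a structured comparison. The comparison should use explicit gap categories such as full alignment, partial alignment, and omission (recorded in this study as fully addressed, partially addressed, and missing).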
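The steps above can be sketched in Python as two small helpers. The first assembles a comparison prompt, and the second checks the model's reply before human review. The prompt wording, the JSON reply format, and the function names are assumptions for illustration. The study's actual prompts are not reproduced here, and the sketch deliberately leaves out the call to any particular AI service.

```python
import json

CATEGORIES = ("fully addressed", "partially addressed", "missing")


def build_prompt(government_output: str, criteria: list[str]) -> str:
    """Assemble a comparison prompt (hypothetical wording, not the study's prompt)."""
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(criteria, start=1))
    return (
        "Compare the government document below against each numbered criterion.\n"
        f"Classify each criterion as one of: {', '.join(CATEGORIES)}.\n"
        "Quote the passage that supports the classification, or leave it empty if missing.\n"
        'Reply with JSON only: [{"id": 1, "category": "...", "evidence": "..."}, ...]\n\n'
        f"CRITERIA:\n{numbered}\n\nGOVERNMENT DOCUMENT:\n{government_output}"
    )


def parse_classifications(raw: str, n_criteria: int) -> dict[int, dict]:
    """Validate the model's JSON reply: known categories only, every criterion covered."""
    rows = json.loads(raw)
    result = {}
    for row in rows:
        if row.get("category") not in CATEGORIES:
            raise ValueError(f"unknown category in row {row!r}")
        result[int(row["id"])] = row
    missing_ids = set(range(1, n_criteria + 1)) - set(result)
    if missing_ids:
        raise ValueError(f"no classification for criteria {sorted(missing_ids)}")
    return result


# Placeholder inputs and a hypothetical model reply; none of this is study data.
prompt = build_prompt("<grant agreement text>", ["Publish a formal risk assessment"])
reply = '[{"id": 1, "category": "missing", "evidence": ""}]'
print(parse_classifications(reply, 1)[1]["category"])  # → missing
```

Rejecting replies with unknown categories or skipped criteria keeps the model's output in the same shape across runs and models. That shared shape is what a repeated-run comparison would need.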

Documenting these inputs makes the method more transparent, more repeatable, and more accessible beyond this case study.

Discussion

This study suggests that AI can support citizen-led accountability in a practical way. In this case, it was able to organize a large documentary record and compare public input against government output. This lowers the time and effort required for structured scrutiny.

AI helps turn scattered material into a structured representation of what people said, what they recommended, and what government produced. This makes omissions more visible and easier to assess systematically.

The CyberPath case shows why this matters. The main gaps were not small technical issues. They were concentrated in major questions such as governance, conflicts of interest, evidence, risk assessment, and barriers to entry. These are exactly the kinds of issues that can disappear inside long policy processes unless someone has the time and skill to reconstruct them.

At the same time, the method has limits. A gap assessment cannot prove motive, misconduct, or bad faith. It can only show whether the public record appears aligned, partly aligned, or silent. Its strength is not that it replaces judgment, but that it makes better judgment possible.

The broader significance of this study is that it points to a different use of AI. Much of the public debate focuses on governments using AI to monitor citizens. This paper shows that AI can also be used in the opposite direction: to help citizens scrutinize government action. In that sense, AI can be used not only for control, but for accountability.

What this means for CyberPath:

When ACS publishes its full approach, the same method can be applied again at far greater scale. Researchers and members of the industry will then be able to download the released materials, combine them with the feedback record, and use AI to produce a structured gap assessment quickly and reproducibly.

That changes the accountability environment around CyberPath. It means that the volume of material no longer prevents structured comparison and public scrutiny. A large consultation process, hundreds of submissions, long reports, and sprawling public debate can still be compared against what is ultimately designed and implemented.

Most importantly, it increases the practical visibility of every contribution. Submissions, articles, posts, and serious comments can all become part of the accountability record. AI makes it possible to recover those voices, structure them, and test whether they were reflected in the final scheme.

Recommendations

Recommendation 1: Test AI-assisted gap assessment as a repeatable accountability method

Government agencies, grant recipients, journalists, and civil society groups should test AI-assisted gap assessment as a repeatable method for comparing public input with policy output.

This would make it easier to assess whether consultation processes are meaningful, whether expert criticism is addressed, and whether funded programs remain aligned with the concerns raised by affected communities.

Recommendation 2: Treat public input as auditable evidence, not symbolic consultation

Policymakers and delivery partners should assume that public submissions, articles, comments, and critiques may later be structured and compared against the final output. This creates a stronger standard for consultation.

Public input should not be collected as a symbolic exercise, but treated as evidence that can be traced, compared, and systematically assessed.

Recommendation 3: Extend the method beyond CyberPath’s grant agreement

The method demonstrated in this study could be tested on other grants, consultations, procurement decisions, and public policy programs. This would help determine whether AI-assisted civic accountability is useful only in exceptional cases or can become a broader democratic tool.

Recommendation 4: Combine AI-assisted scrutiny with human review

AI should be used to organize, compare, and surface gaps, but important judgments should still be reviewed by humans. This preserves context, reduces misinterpretation, and keeps the accountability process rigorous.

Conclusion

This study suggests that AI can support citizen-led accountability in a practical way. It also suggests that the method may be reproducible when the source materials, prompts, and comparison criteria are clearly documented.

The claim advanced here is therefore limited: the study demonstrates the feasibility of the method in one case, rather than establishing its reliability across all contexts.

In the CyberPath case, the method was used to extract public input, structure policy expectations, and compare them against what government ultimately funded. The result was not a final judgment on the policy, but a clear and structured view of where alignment existed and where important gaps remained.

The broader significance of this work is methodological. It shows that citizens, journalists, researchers, and civil society groups can use AI to audit the relationship between consultation and implementation at scale. This does not prove motive or misconduct. But it does make government action easier to inspect, compare, and challenge.

The contribution of this paper is therefore best understood as a proof of concept for AI-assisted civic accountability. In that sense, AI can be used not only to extend state capacity, but also to strengthen public oversight. If applied carefully, this method offers an important inversion: not AI primarily as a means of observing citizens, but AI as a tool that can assist citizens in scrutinizing government.

References

1. Growing and Professionalising the Cyber Security Industry Program, Australian Government, December 2024

2. Understanding First, Solutions Second: A Systems Thinking Analysis of the Proposed Australian Cybersecurity Professionalization Scheme, Michael Collins, 2025

3. Reconsider the Australian Government’s grant for professionalising the cybersecurity industry, Benjamin Mosse, January 2025

4. Listening Before Leaping: AISA’s Cautionary Path on Professionalisation, Benjamin Mosse, May 2025

5. Grant Agreement between DISR and ACS, Australian Government, November 2025